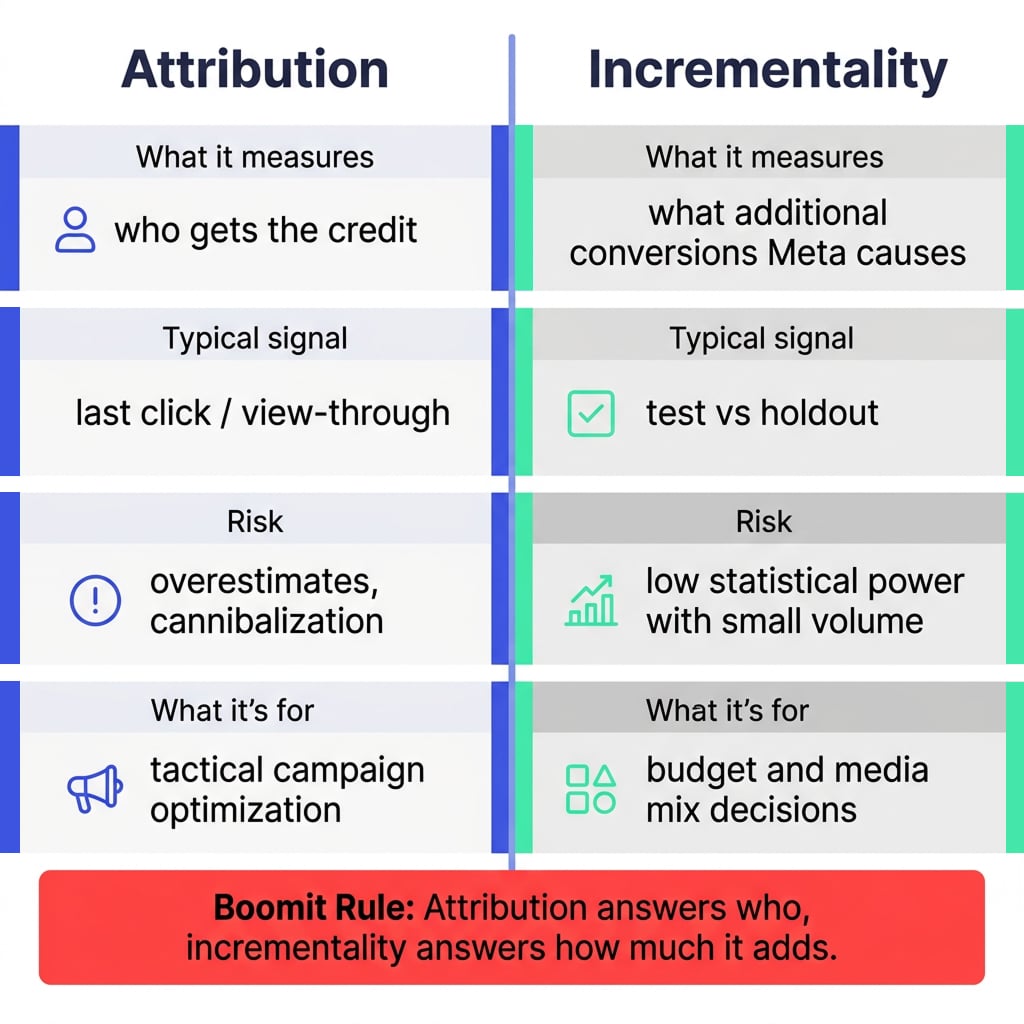

Incrementality is the difference between “my ads were attributed conversions” and “my ads caused additional conversions.” In Meta, that difference isn’t academic: it’s the line between optimizing spend with confidence and making decisions on metrics that can be inflated by attribution bias or demand that would have happened anyway.

This manual is for Growth and Performance teams that already run campaigns on Meta and need a concrete answer: how many sales, leads, or in-app actions is Meta driving incrementally, and how much should I invest based on that?

We’ll cover what incrementality is, when to measure it, how to set up a Conversion Lift, and how to read it to turn results into spend decisions.

What Meta Ads incrementality is and what it actually measures

Incrementality is the net causal impact of advertising: conversions that happen because of ad exposure and would not have happened in a no-ads scenario (or with less exposure).

In Meta, the standard way to measure incrementality is Conversion Lift, an experiment that creates an exposed group (test) and a non-exposed group (holdout/control) to estimate incremental “lift.” The key difference: incrementality measures causality, while click- or view-based attribution does not necessarily do so.

When it makes sense to measure incrementality and when it doesn’t

It makes sense to measure incrementality when:

- You’ve already scaled and the next step is moving budget “for real” (not just micro-optimizations).

- You’re making a big change: new country, new onboarding, new value proposition, a new creative mix, or a step-change in spend.

- You have real concerns about cannibalization: sales that were already coming from organic, brand, or CRM.

It doesn’t make sense (or tends to be weak) when:

- Your volume is too low and the test is underpowered (no statistical signal).

- You’re changing too many things at once and can’t isolate the treatment.

- Your baseline measurement is broken (bad event instrumentation or inconsistency across platforms).

Other channels don’t invalidate the test

An incrementality test doesn’t require turning off the ecosystem. Google, TikTok, influencers, CRM, organic, or offline can keep running as long as they stay reasonably stable and affect test and holdout similarly.

The right question isn’t “who gets credit for the conversion?” It’s: with the real mix running, what does Meta add on top of what other channels already generate?

The most common case: “Google Search got the last click”

Typical example: someone sees an Instagram ad, then searches the brand on Google and converts via Search. In GA4, AppsFlyer, Adjust, or Google Ads, that conversion may show as attributed to Google.

For incrementality, the last click doesn’t matter. What matters is whether the conversion happened in the test group or the holdout group. If Meta correctly receives the conversion event (via Pixel, CAPI, SDK, MMP, or offline events), it can count that conversion inside the experiment even if Google received the credit under a last-click attribution model.
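To make the distinction concrete, here is a minimal sketch that tallies the same conversions in two ways: by last-click channel (the attribution view) and by experiment group (the lift view). The `Conversion` record and its field names are hypothetical and do not reflect Meta's or any MMP's export schema.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Conversion:
    user_id: str
    group: str               # "test" or "holdout", assigned by the experiment (hypothetical field)
    last_click_channel: str  # channel credited by your attribution tool (hypothetical field)


def conversions_by_channel(conversions):
    """Attribution view: which channel got the last click."""
    return Counter(c.last_click_channel for c in conversions)


def conversions_by_group(conversions):
    """Experiment view: which group the converting user belonged to."""
    return Counter(c.group for c in conversions)


sample = [
    Conversion("u1", "test", "google_search"),
    Conversion("u2", "test", "meta"),
    Conversion("u3", "holdout", "google_search"),
    Conversion("u4", "test", "google_search"),
]

print(conversions_by_channel(sample))
print(conversions_by_group(sample))
```

Google Search "owns" most of these conversions in the attribution view. The experiment ignores that and only asks which group each converter was in. In a real test you compare group conversion *rates*, not raw counts, because test and holdout are rarely the same size.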

Meta test types: Conversion Lift vs A/B vs holdout

In practice, there are three different questions — and each calls for a different test:

- “Does this create more incremental conversions?” → Conversion Lift (best when you need causality).

- “Which variant performs better inside the system?” → A/B test (e.g., creative A vs B, structure A vs B). It doesn’t always answer pure incrementality; it compares variants within the delivery environment.

- “What happens if I turn off or reduce spend on X?” → Holdout / geo-holdout (depending on whether you can isolate geos or audiences).

Step-by-step manual: how to set up a Conversion Lift in Meta

1) Define the hypothesis

Concrete examples:

- “Increasing budget by 30% in Prospecting with UGC creatives increases incremental conversions with acceptable efficiency.”

- “Optimizing for a deeper event improves incrementality, even if attributed CPA goes up.”

The hypothesis defines what you’re testing: campaign, ad set, event, creatives, or strategy.

2) Ensure minimum viable measurement

Before the experiment, validate:

- A consistent conversion event (lead, purchase, first deposit, etc.).

- A conversion window that matches your real conversion latency and cycle (fintech, for example, often has longer delays).

- Stable tracking configuration (Pixel / SDK / server-side if applicable).

If this isn’t solid, the test can end up measuring noise.
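One concrete pre-test check is duplication. Meta deduplicates browser Pixel and Conversions API events that share the same `event_name` and `event_id`, so events without an ID, or the same ID sent twice from one source, are worth catching early. The sketch below audits a hypothetical event export. The row format (`source`, `event_name`, `event_id`) is an assumption and not an official Meta export.

```python
from collections import defaultdict


def audit_events(rows):
    """Audit a hypothetical event export for deduplication problems.

    rows: iterable of dicts with 'source' (e.g. 'pixel', 'capi'),
    'event_name', and 'event_id' (may be None or empty).
    """
    missing_event_id = 0
    sources_by_key = defaultdict(list)
    for row in rows:
        if not row.get("event_id"):
            # Without an ID, browser and server copies cannot be matched.
            missing_event_id += 1
            continue
        sources_by_key[(row["event_name"], row["event_id"])].append(row["source"])

    # Same key sent more than once by the same source: a true duplicate.
    same_source_duplicates = sum(
        1 for srcs in sources_by_key.values() if len(srcs) != len(set(srcs))
    )
    # Same key from different sources: the expected Pixel + CAPI pair.
    cross_source_pairs = sum(
        1 for srcs in sources_by_key.values() if len(set(srcs)) > 1
    )
    return {
        "missing_event_id": missing_event_id,
        "same_source_duplicates": same_source_duplicates,
        "cross_source_pairs": cross_source_pairs,
    }


rows = [
    {"source": "pixel", "event_name": "Purchase", "event_id": "o1"},
    {"source": "capi", "event_name": "Purchase", "event_id": "o1"},
    {"source": "capi", "event_name": "Purchase", "event_id": "o2"},
    {"source": "capi", "event_name": "Purchase", "event_id": "o2"},
    {"source": "pixel", "event_name": "Purchase", "event_id": None},
]
print(audit_events(rows))
```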

3) Configure the experiment in Experiments / Ads Manager

At a high level, you’ll:

- Go to tests/experiments.

- Select Conversion Lift.

- Choose which campaigns/ad sets are included.

- Select the conversion event to evaluate.

- Set duration and holdout size.

4) Define holdout size and power

There’s no universal magic number. But there is one reality: if the test isn’t powered, you won’t get a usable signal.

Rule of thumb: the more “saturated” your mix is, the more likely the holdout converts too — and the harder it is to detect lift (you need more volume).
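For a rough sense of "enough volume," you can use the standard two-proportion sample-size formula. This is not Meta's own power calculation. It is a back-of-envelope sketch that assumes user-level randomization, equal-sized test and holdout groups, a two-sided test, and a holdout conversion rate you estimate yourself. The function name and the example rates are hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist


def users_per_group(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate users needed per group to detect `relative_lift`
    over `baseline_rate` (the holdout conversion rate)."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


# Hypothetical scenarios: a lighter ecosystem vs a saturated one where the
# holdout already converts more and the relative lift is smaller.
light = users_per_group(baseline_rate=0.02, relative_lift=0.25)
saturated = users_per_group(baseline_rate=0.04, relative_lift=0.08)
print(light, saturated)
```

With these inputs, the saturated scenario needs several times more users per group. This is the "you need more volume" effect described above: the absolute gap between test and holdout shrinks, so noise covers it more easily.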

A simple example (assuming test and holdout groups of equal size):

| Scenario | Holdout conversions | Test conversions | Estimated lift |
|---|---|---|---|
| Simple ecosystem | 1,000 | 1,250 | +25% |
| Saturated ecosystem | 1,800 | 1,950 | +8.3% |

In both cases Meta adds value. In the second, the ecosystem already generates many conversions without Meta, so the marginal lift looks smaller and may require more spend/duration to detect.
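The lift figures in the table come from comparing conversion rates. With equal group sizes, that reduces to comparing counts. Here is a minimal sketch. The function name is illustrative, and the unequal-split example uses hypothetical group sizes.

```python
def lift_pct(test_conversions, holdout_conversions, test_users=1, holdout_users=1):
    """Relative lift of the test conversion rate over the holdout rate, in percent.

    With the default group sizes of 1 this compares raw counts, which is only
    valid when test and holdout groups are the same size.
    """
    test_rate = test_conversions / test_users
    holdout_rate = holdout_conversions / holdout_users
    return (test_rate / holdout_rate - 1) * 100


print(round(lift_pct(1250, 1000), 1))  # simple ecosystem: 25.0
print(round(lift_pct(1950, 1800), 1))  # saturated ecosystem: 8.3

# Hypothetical 90/10 split: rates must be compared, not counts.
print(round(lift_pct(9_000, 800, test_users=900_000, holdout_users=100_000), 1))  # 25.0
```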

5) Control “competing ads” and overlaps

Classic issue: the holdout gets contaminated because the user is exposed elsewhere (another campaign, another ad account, a partner). Another common issue is leaving very similar campaigns outside the experiment and ending up measuring “marginality” instead of Meta as a channel.

The question isn’t only “what do I include?” but also “what am I leaving out that looks too similar to what I’m testing?”

6) Run the test without touching the wheel

During the experiment:

- Avoid major structural changes (budgets, targeting, creatives) unless they’re part of the planned treatment.

- Document any unavoidable changes (product, pricing, tracking outages, etc.).

Campaign-level test vs “Meta as a channel” and the “account-level” myth

In the most common self-serve setup, Conversion Lift is built by selecting specific campaigns. That means:

- If you select one specific campaign, you’re measuring that campaign’s incrementality above the rest of the mix (including the rest of Meta).

- If you want to measure “Meta as a channel,” you need to include all relevant acquisition campaigns for the same product, country, event, and audience.

“Account-level” shouldn’t be assumed to be a feature available to every advertiser. Operationally, it means the treatment includes everything relevant in Meta for the business question. If you test one campaign while similar campaigns keep running outside the test, you’re not measuring Meta as a full channel.

What to do with other Meta campaigns while testing

- If you want to measure a specific campaign: avoid very similar campaigns outside the test, or accept you’re measuring only that campaign’s marginal incrementality.

- If you want to measure Meta as a channel: include all relevant campaigns for the same scope (product/country/event/audience).

- If there are campaigns for another product, another country, or a clearly different audience: they can coexist, but review overlap.
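A simple hygiene pass before launch is to list campaigns outside the test that share the test's scope. The sketch below assumes a hypothetical campaign inventory and treats "same scope" as an exact match on product, country, event, and audience. That is a simplification, because real audience overlap can exist even when the labels differ.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Campaign:
    name: str
    product: str
    country: str
    event: str
    audience: str
    in_test: bool


def scope(c):
    return (c.product, c.country, c.event, c.audience)


def same_scope_outside_test(campaigns):
    """Names of campaigns left out of the test that match a tested scope."""
    tested_scopes = {scope(c) for c in campaigns if c.in_test}
    return [c.name for c in campaigns if not c.in_test and scope(c) in tested_scopes]


inventory = [
    Campaign("A_prospecting", "app", "MX", "first_deposit", "broad", in_test=True),
    Campaign("B_prospecting_copy", "app", "MX", "first_deposit", "broad", in_test=False),
    Campaign("C_other_country", "app", "CO", "first_deposit", "broad", in_test=False),
]
print(same_scope_outside_test(inventory))
```

Here only `B_prospecting_copy` is flagged. Either include it in the test to measure Meta as a channel, or accept that you are measuring only the marginal incrementality of the tested campaign.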

How to read the results

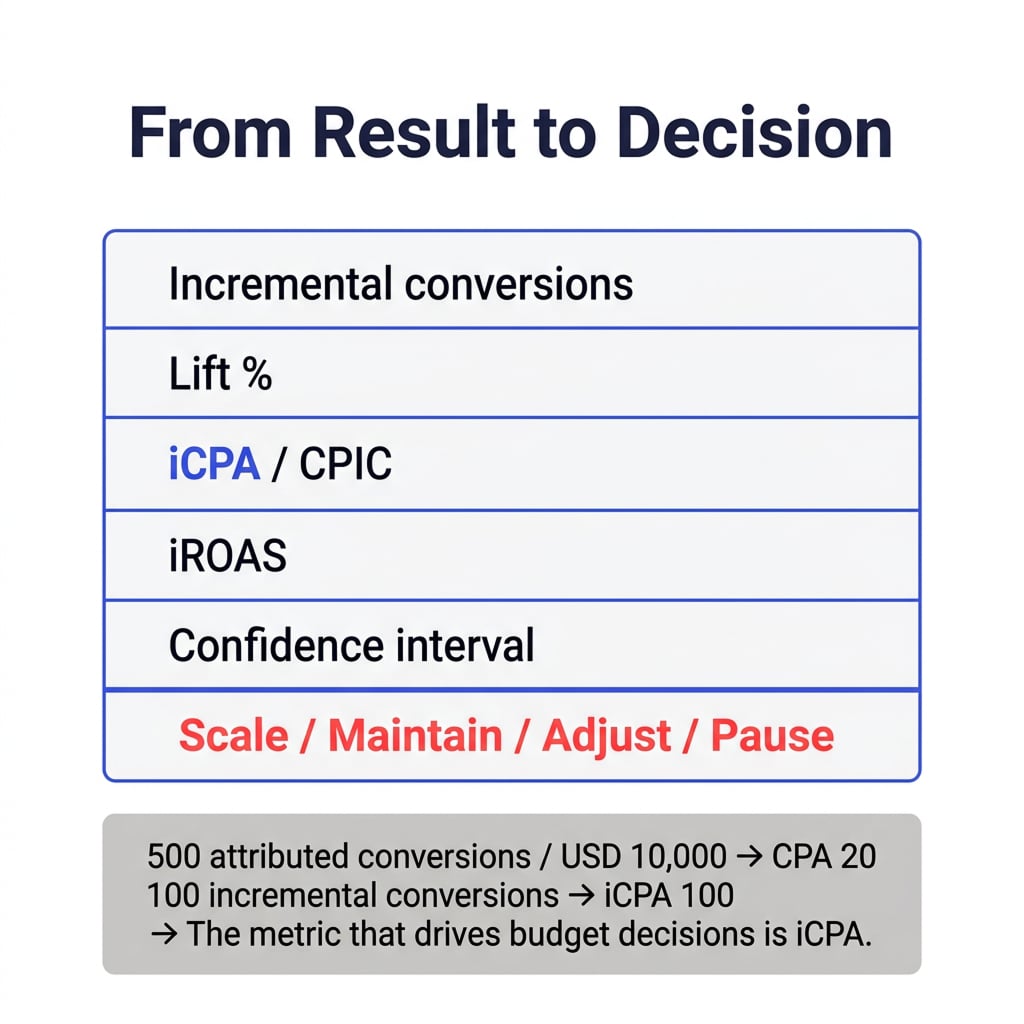

Conversion Lift returns the difference between test and holdout for the selected event, plus derived metrics like lift percentage. Knowing how to read them is what turns the experiment into clear, actionable decisions.

What to look at first

- Incremental conversions: how many additional conversions Meta generated.

- Lift %: how much the test group improved vs holdout.

- iCPA / CPIC (incremental cost per acquisition / cost per incremental conversion): how much each incremental conversion cost.

- iROAS (incremental return on ad spend): how much incremental revenue Meta generated, if your measurement supports it.

- Confidence interval: the range of plausible values for the effect; the narrower it is, the more reliable the estimate.

- Volume: whether there was enough data to detect the effect.
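The metrics above can be derived from group-level counts. The sketch below uses a simple normal-approximation confidence interval on the difference in conversion rates. This is not Meta's methodology, so expect its numbers to differ from the Lift report. Function names, the 90% default confidence, and the example inputs are hypothetical. `incremental_revenue` is assumed to come from your own value-based measurement.

```python
from dataclasses import dataclass
from math import inf, sqrt
from statistics import NormalDist
from typing import Optional


@dataclass
class LiftSummary:
    incremental_conversions: float
    lift_pct: float
    icpa: float
    iroas: Optional[float]
    ci_low: float   # lower bound, in incremental conversions
    ci_high: float  # upper bound, in incremental conversions


def summarize(test_conv, test_users, holdout_conv, holdout_users,
              spend, incremental_revenue=None, confidence=0.90):
    p_test = test_conv / test_users
    p_holdout = holdout_conv / holdout_users
    # Conversions the test group would have had without ads, at the holdout rate.
    baseline = p_holdout * test_users
    incremental = test_conv - baseline
    # Normal-approximation standard error of the difference in rates.
    se = sqrt(p_test * (1 - p_test) / test_users
              + p_holdout * (1 - p_holdout) / holdout_users)
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    diff = p_test - p_holdout
    return LiftSummary(
        incremental_conversions=incremental,
        lift_pct=(p_test / p_holdout - 1) * 100,
        icpa=spend / incremental if incremental > 0 else inf,
        iroas=None if incremental_revenue is None else incremental_revenue / spend,
        ci_low=(diff - z * se) * test_users,
        ci_high=(diff + z * se) * test_users,
    )


summary = summarize(1250, 100_000, 1000, 100_000, spend=10_000)
print(summary)
```

If the interval's lower bound sits above zero, the effect is distinguishable from noise at the chosen confidence level. A wide interval that straddles zero usually points back to the volume problem.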

The example that aligns Growth + Finance in 20 seconds

If Meta reports 500 conversions on USD 10,000 spend, the attributed CPA is USD 20.

But if the test shows only 100 incremental conversions, the real iCPA is USD 100.

That’s the metric that should drive budget decisions.
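The arithmetic is the same formula applied to two different denominators: conversions Meta attributes versus conversions the experiment shows as incremental.

```python
def cost_per(spend, conversions):
    """Cost per conversion for any conversion count."""
    return spend / conversions


attributed_cpa = cost_per(10_000, 500)   # 20.0, what the platform report suggests
incremental_cpa = cost_per(10_000, 100)  # 100.0, what the lift test shows
print(incremental_cpa / attributed_cpa)  # 5.0: each incremental conversion costs 5x more
```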

Practical reading ranges

There’s no universal benchmark. It depends on the business, brand, funnel, spend, audience, creative, tracking, and mix saturation. As an operational guideline:

- 0% or negative with good volume: concerning signal.

- 0% or low with low volume: inconclusive result.

- 3%–8%: can be reasonable in saturated ecosystems if iCPA/iROAS works.

- 8%–15%: healthy signal.

- 15%–20%: strong signal.

- Above 20%: very strong result, especially with many channels active.
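If you want these ranges applied consistently across tests, you can encode them as a function. This is a sketch of the guideline above, not a statistical rule. Two assumptions are ours: any result with insufficient volume is treated as inconclusive, and lifts between 0% and 3% with good volume, which the guideline does not cover, are flagged for a decision on iCPA/iROAS.

```python
def read_lift(lift_pct: float, enough_volume: bool) -> str:
    """Map a lift result to the operational reading ranges (a guideline only)."""
    if not enough_volume:
        return "inconclusive: not enough volume"  # assumption: applies to any lift
    if lift_pct <= 0:
        return "concerning signal"
    if lift_pct < 3:
        return "below the guideline ranges: decide on iCPA/iROAS"  # gap in the guideline
    if lift_pct < 8:
        return "reasonable in saturated ecosystems if iCPA/iROAS works"
    if lift_pct < 15:
        return "healthy signal"
    if lift_pct <= 20:
        return "strong signal"
    return "very strong result"


print(read_lift(8.3, enough_volume=True))
```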

How to turn it into an operating rule

This is where many teams fail: they stop at the PDF and don’t convert it into a decision.

At Boomit, we usually translate it into a rule like:

- “For every $1 spent in this setup, I generate X incremental conversions for this event, at iCPA Y.”

- “This learning applies to this country, this funnel, and this creative set; outside of that, we re-validate.”

Then you use it to:

- Set an efficient spend ceiling.

- Reallocate budget between prospecting and remarketing.

- Justify incremental spend to Finance with a causal metric.
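An operating rule can be as small as three numbers derived from the lift result. The sketch below is hypothetical in its names, target, and inputs. It deliberately makes no projection to other spend levels, because returns are rarely linear and the rule only holds for the tested country, funnel, and creative set.

```python
def operating_rule(spend, incremental_conversions, target_icpa):
    """Summarize a lift result as a spend rule for the tested scope only."""
    icpa = spend / incremental_conversions
    return {
        "incremental_per_1000_usd": incremental_conversions / spend * 1000,
        "icpa": icpa,
        "within_target": icpa <= target_icpa,
    }


rule = operating_rule(spend=10_000, incremental_conversions=250, target_icpa=50)
print(rule)
```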

Andromeda and incrementality: what changes in practice

Andromeda is Meta’s ads retrieval system (the stage that selects ad candidates before the auction), designed to improve relevance and efficiency at scale.

So what changes for incrementality?

- Creative competes before the auction even starts. If your creative system doesn’t supply real variation and strong signals, your ads may lose quality delivery at the retrieval stage.

- The algorithm’s learning is more sensitive to real signals. That makes it even more valuable to separate “what the algorithm attributes” from “what actually increments the business.”

- Your question changes: it’s not only “which campaign has the best attributed ROAS,” but “which combination of creatives + event + structure generates incremental lift and scales without breaking efficiency.”

How we do it at Boomit

At Boomit we treat it as a system, not an isolated experiment. Our internal model is built on three pillars:

Baseline → Intervention → Learning

- Baseline: establish what happens without the intervention. Define the events, windows, and segments that matter (e.g., first deposit in fintech).

- Intervention: execute a clear treatment (e.g., a new creative approach, switching optimization to a deeper event, or reallocating budget across campaigns).

- Learning: translate lift into spend decisions and an optimization backlog (measurement, creatives, landing/onboarding).

This model is supported by three layers we work on together:

- Marketing Data Engineering: events, signal quality, consistency.

- Data Analytics: result interpretation, useful cuts (country, OS, cohort).

- Performance Creative: real message/angle variety so the delivery system has material to learn from.

Common mistakes / What to avoid

Most incrementality tests “fail” due to design or execution, not because of the tool.

- Low volume: small audiences, low spend, or few conversions can make results inconclusive.

- Poor tracking: incomplete, duplicated, or delayed Pixel/CAPI/SDK/MMP/offline events harm measurement.

- Major commercial changes: promos, pricing, landing, funnel, or event changes during the test make interpretation harder.

- Unbalanced external actions: outside actions impacting test vs holdout differently can bias the comparison.

- Similar Meta campaigns outside the test: can expose holdout to similar stimuli and confuse interpretation.

Actionable checklist

Before launching (design)

- Define what decision you want to make with the result (scale, cut, change event, etc.).

- Decide whether you’re measuring a specific campaign or Meta as an acquisition system.

- Choose the right event: ideally real business value, not a shallow micro-event.

- Define in advance what lift, iCPA, or iROAS would be acceptable.

Measurement (instrumentation)

- Validate Meta is receiving events correctly: Pixel, CAPI, SDK, MMP, or offline events.

- Check duplicates, delays, and event consistency.

- Align the conversion window with the business’s true latency.

Volume and stability (execution)

- Estimate conversion volume, audience size, and spend (power).

- Keep budgets, creatives, landings, and promotions stable during the test.

- Document any unavoidable change.

Scope inside Meta (campaign hygiene)

- Review similar Meta campaigns that could be left outside the test.

- If you want to measure Meta as a channel: include all relevant campaigns for that product/country/event/audience.

- If you want to measure a single campaign: minimize overlap with BAU outside the experiment.
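Teams that run lift tests regularly sometimes keep this checklist as a structured object so that a launch is blocked until every item is confirmed. The sketch below is a hypothetical representation of the items above. Field names are illustrative, and "document any unavoidable change" is left out because it happens during the test, not before it.

```python
from dataclasses import dataclass, fields


@dataclass
class PreflightChecklist:
    decision_defined: bool = False               # scale, cut, change event, etc.
    scope_defined: bool = False                  # single campaign vs Meta as a channel
    business_event_chosen: bool = False
    thresholds_defined: bool = False             # acceptable lift / iCPA / iROAS
    events_validated: bool = False               # Pixel, CAPI, SDK, MMP, offline
    duplicates_and_delays_checked: bool = False
    window_matches_latency: bool = False
    power_estimated: bool = False
    change_freeze_agreed: bool = False           # budgets, creatives, landings, promos
    similar_campaigns_reviewed: bool = False

    def missing(self):
        """Names of checklist items not yet confirmed."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


checklist = PreflightChecklist(decision_defined=True, scope_defined=True)
print(checklist.missing())
```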

Conclusion

Meta incrementality isn’t a trend: it’s a budget management tool when attribution isn’t enough to make confident spend decisions. A well-designed Conversion Lift gives you causality — but only if you protect power, maintain reasonable stability, and align the design with the business question.

The mature conversation isn’t “does Meta work or not?” It’s: how much incremental value does Meta generate, at what cost, inside this specific ecosystem?

If you’re about to scale Meta spend (or you’re debating budget with Finance), strong incrementality measurement is often the bridge between perceived performance and real performance. At Boomit we run it as a continuous loop (measurement + creative + optimization) so learning turns into growth — not a report nobody uses.

If you’d like, visit our Growth Marketing Services landing page and get in touch with us; we can review your case and quickly tell you whether you’re ready to run a solid lift test, which hypothesis to test first, and how to turn the outcome into a spend rule.